by jeff

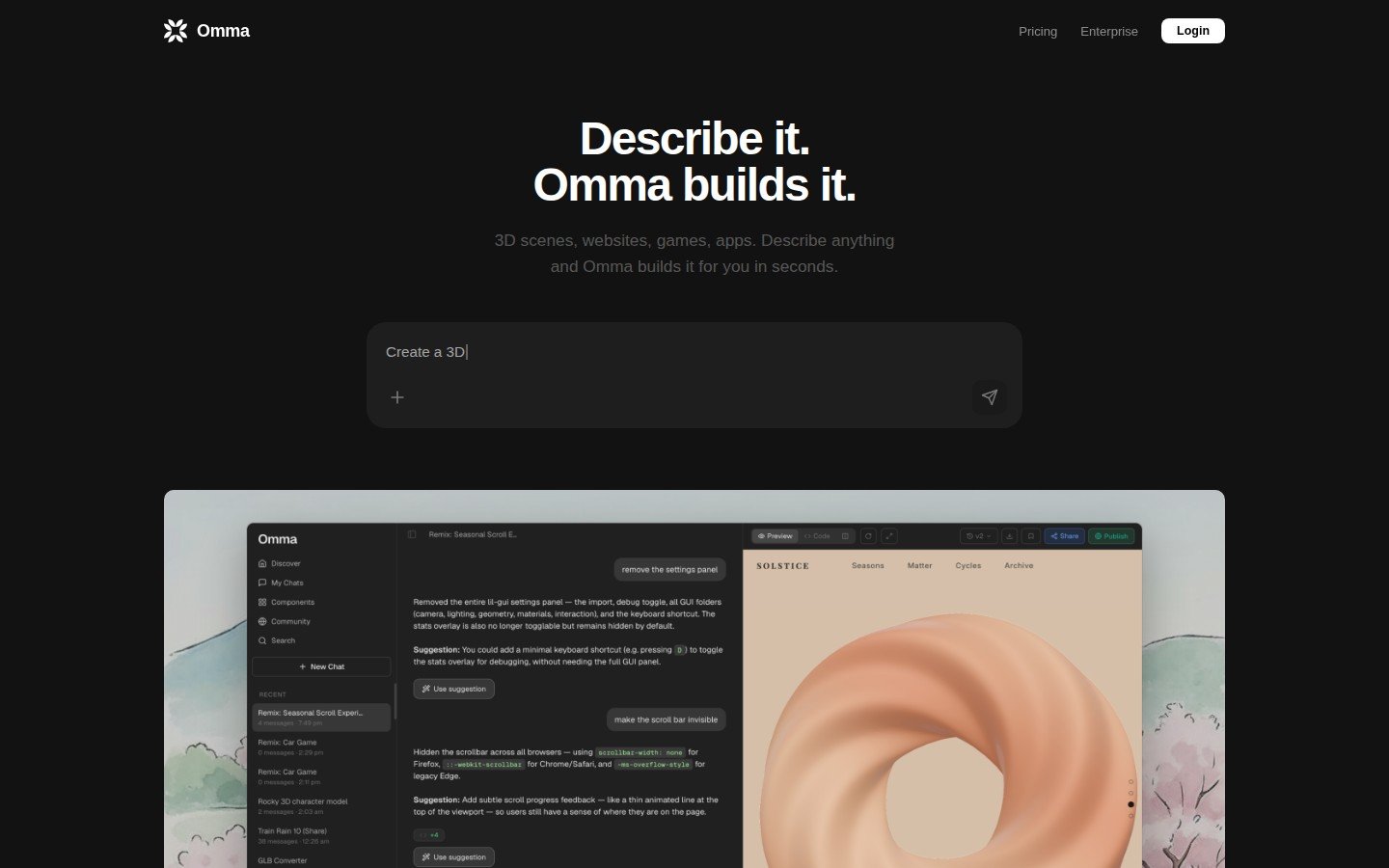

Spline's Omma AI canvas generates production-ready 3D websites, motion design, and interactive apps from text prompts with no design background required.

Spline launched Omma on March 24, 2026. Spline's platform is used by over three million designers, including teams at Google, Datadog, Robinhood, and UPS. The Omma AI canvas takes natural language input and produces fully interactive web experiences that combine 3D scenes, motion design, animation, and functional UI in one tool. The output is production-ready and deployable, not just a prototype.

The system runs multiple AI agents in parallel. One handles code generation via large language models. A second manages 3D mesh creation. A third generates images. All three work simultaneously from a single text prompt. Users type a command like /3d to trigger 3D model creation. The model gets placed into a scene automatically, with the resulting GLB file compressed and optimized for the web. Every element stays editable through Spline's visual tools after generation.
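The fan-out described above, one prompt feeding three agents at once, can be illustrated with a minimal sketch. This is not Spline's implementation or API: the function names `generate_code`, `generate_mesh`, `generate_image`, and `run_agents`, and the `SceneAssets` type, are all hypothetical stand-ins. The sketch uses Python's `asyncio` to run the three tasks concurrently.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class SceneAssets:
    """Container for the three outputs produced from one prompt."""
    code: str
    mesh: str
    image: str


# Hypothetical stand-ins for the three agents; none of these are Spline APIs.
async def generate_code(prompt: str) -> str:
    await asyncio.sleep(0.01)  # simulate model latency
    return f"code for: {prompt}"


async def generate_mesh(prompt: str) -> str:
    await asyncio.sleep(0.01)  # simulate model latency
    return f"mesh for: {prompt}"


async def generate_image(prompt: str) -> str:
    await asyncio.sleep(0.01)  # simulate model latency
    return f"image for: {prompt}"


async def run_agents(prompt: str) -> SceneAssets:
    # gather() runs all three coroutines concurrently and returns their
    # results in the same order the awaitables were passed in.
    code, mesh, image = await asyncio.gather(
        generate_code(prompt),
        generate_mesh(prompt),
        generate_image(prompt),
    )
    return SceneAssets(code=code, mesh=mesh, image=image)


if __name__ == "__main__":
    print(asyncio.run(run_agents("a rotating chrome teapot")))
```

Because the three tasks do not depend on one another, the total wait in a setup like this is roughly that of the slowest agent rather than the sum of all three.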

Omma AI Canvas: From Prompt to Production

The goal of the Omma AI canvas is to eliminate the prototype-to-developer handoff. Spline CEO Alejandro Leon framed it as flexibility for teams that need speed without sacrificing control. Exports target web, mobile, and XR devices. The platform handles custom domain assignment and direct deployment to production. No prior design experience is needed to ship a finished interactive experience.

Pricing starts at $29 per month for the Professional plan. Credits are available for individual purchase. An Enterprise tier serves larger teams. Spline has raised $32 million to date from investors including Third Point Ventures, Gradient Ventures, and Y Combinator. The company was founded in 2020. It now ships three products: the core Spline editor for real-time 3D, Hana for 2D motion design, and the new Omma AI canvas.

Omma AI Canvas in the Competitive Landscape

Tools like Vercel's v0, Bolt, and Lovable generate web UI from text prompts. Most produce flat, component-based interfaces. The Omma AI canvas differs by combining 3D generation, image generation, and coded interactivity in one canvas. That positions it in a space between design-heavy 3D tools and code-forward web generators. For motion designers wanting a faster path to shipping interactive work, it removes the need to stitch separate 3D, image, and code tools together.

How the Omma AI canvas performs at scale is still an open question. Complex 3D scenes will test the multi-agent system in ways the launch demo cannot. That demo runs 24 seconds and shows clean, immediate results. Production use cases will reveal more. The ambition is clear: a single designer with a text prompt delivering what once required a full team. The canvas is live now at omma.build.