by kai

AI agent orchestration is now the designer’s core skill. Shipping three apps with AI in 2025 showed one designer that clear intent and systems thinking matter more than writing code.

The barrier between design and engineering was never really about creativity or intelligence. It was about syntax. Learning to write code takes years, and by the time the syntax sticks, the framework has changed. Most designers stayed on their side of the divide — not because they lacked the ability to build, but because the cost of entry was too high. Benhur Senabathi, a designer who shipped three apps in 2025 using AI coding tools, argues that this divide has collapsed. And the designers who adapt fastest are the ones who already think in systems.

That collapse happened because AI agent orchestration removed the translation layer. Designers have always understood how software should work. The hard part was expressing that understanding as executable code. With tools like Claude Code and Cursor, that expression now happens in plain language. Senabathi built over 15 working prototypes in a single year using a basic Swift foundation he had learned three years earlier — and used that knowledge perhaps five percent of the time. The rest was orchestration: defining intent, structuring context, and guiding agents through complex problem spaces.

Why AI Agent Orchestration Fits the Design Mindset

Design training prepares people for AI agent orchestration better than most realize. Defining outcomes clearly, anticipating edge cases, communicating intent without shared context — these are the daily tools of any working designer. They are also the exact skills that determine whether an AI agent produces something useful or something generic. Weak prompts produce weak outputs. Vague intent produces buggy prototypes. Designers who bring specificity, user empathy, and documented edge cases to their AI interactions consistently get better results than those who treat the agent as an autocomplete tool.

What AI cannot do is understand the user. It has no access to research sessions, no sense of which error states matter, no awareness of regulatory constraints or the emotional tone a product needs to carry. Those gaps are not bugs in the system — they are the designer’s domain. The work has not disappeared. It has shifted upstream. AI agent orchestration means that the quality of what gets built is now directly tied to the quality of the design intent that precedes it.

Four Phases of AI Agent Orchestration in Practice

Senabathi describes his learning arc in four phases. The first was accepting everything the agent produced: copy-pasting outputs, watching things break, moving on. That approach failed because treating the agent as someone to hand work off to produces generic results. The shift came when he started treating each prompt as a collaboration, sharing not just what he wanted built but why, and how it should feel to the user.

The second phase was learning to debug through conversation. Instead of saying “fix this,” he learned to describe expected behavior, actual behavior, and his intent for the feature. He drew connection flows in Figma, wrote steps in a notebook, then returned to the agent with three distinct user scenarios — not a bug report. Scenario three worked. That discipline — forming a hypothesis before prompting — is the same discipline designers use in usability testing.
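One way to picture that habit is as a small template filled in before prompting. The sketch below is illustrative only: the `DebugBrief` and `Scenario` names, and the device-pairing example, are assumptions made up for this article, not Senabathi's actual prompts or any tool's API.

```python
from dataclasses import dataclass, field


@dataclass
class Scenario:
    """One concrete user scenario to hand to the agent (hypothetical structure)."""
    name: str
    steps: list[str]


@dataclass
class DebugBrief:
    """Bundles the feature's intent, the expected behavior, and the actual behavior."""
    intent: str
    expected: str
    actual: str
    scenarios: list[Scenario] = field(default_factory=list)

    def to_prompt(self) -> str:
        # State intent and the expected/actual gap first, then list scenarios.
        lines = [
            f"Intent: {self.intent}",
            f"Expected behavior: {self.expected}",
            f"Actual behavior: {self.actual}",
        ]
        for number, scenario in enumerate(self.scenarios, start=1):
            lines.append(f"Scenario {number}: {scenario.name}")
            for step in scenario.steps:
                lines.append(f"  - {step}")
        return "\n".join(lines)


# Invented example content, purely for illustration.
brief = DebugBrief(
    intent="Let two devices pair so a shared list stays in sync.",
    expected="After pairing, an item added on one device appears on the other.",
    actual="Pairing succeeds, but new items never show up on the second device.",
    scenarios=[
        Scenario("Both devices online", ["Pair devices", "Add item on device A"]),
        Scenario("Device B reconnects later", ["Pair devices", "Take B offline", "Add item on A", "Reconnect B"]),
        Scenario("Pairing repeated after reinstall", ["Reinstall app on B", "Pair again", "Add item on A"]),
    ],
)

prompt = brief.to_prompt()
print(prompt)
```

The point of the structure is that an empty field is visible before the prompt is sent: if the expected behavior cannot be written down, the hypothesis is not ready yet.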

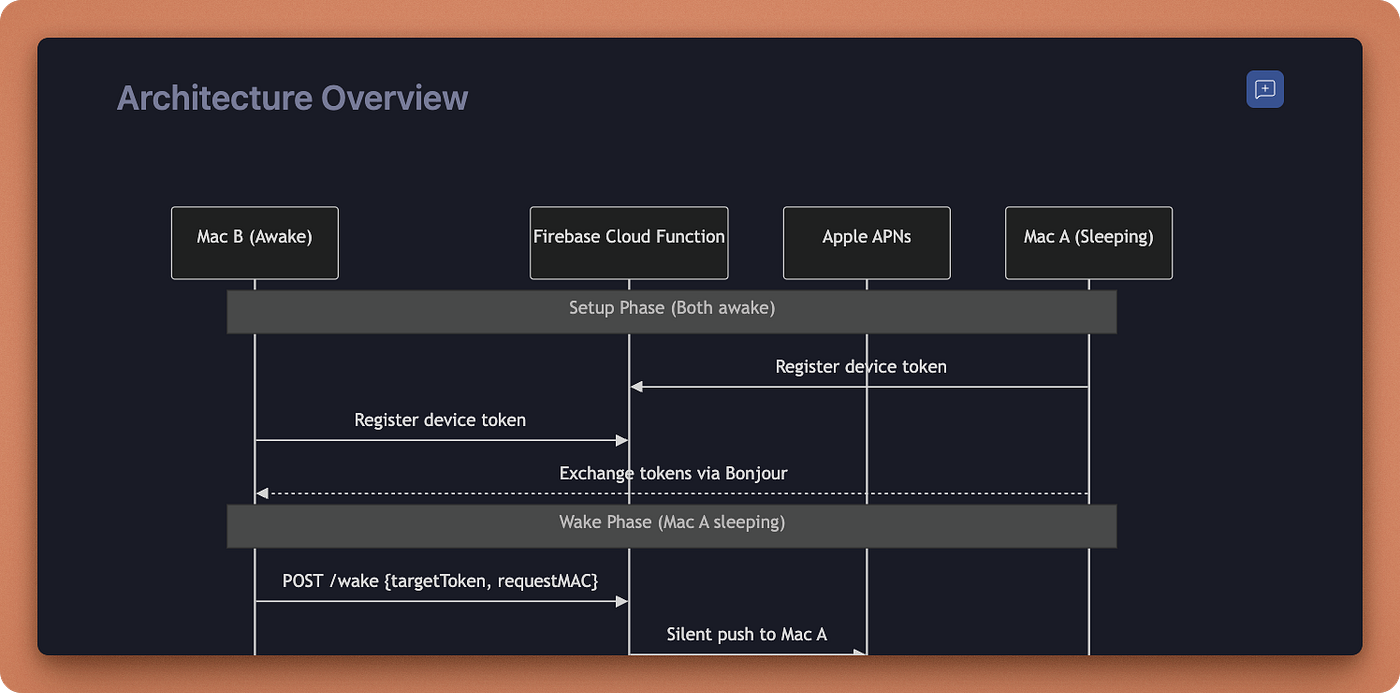

Phase three was systems thinking: treating every prompt as part of a larger architecture, not an isolated fix. This is where tools like Figma’s dev mode MCP and detailed annotation layers became essential. Giving agents structured documentation (architecture diagrams, design system definitions, user journey maps) narrowed the gap between design intent and built output. An ASCII diagram of the data model, generated by the AI itself, revealed edge cases that neither the designer nor the agent had considered.

Phase four was knowing when to stop: shipping the MVP, gathering real usage data, then returning to the agent with actual context instead of hypothetical optimisations.
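The structured documentation from phase three can be thought of as a context bundle that is checked for gaps before the agent sees it. This is a minimal sketch under stated assumptions: the section names, the `build_context` helper, and the sample content are hypothetical, and nothing here calls Figma, MCP, or any real agent API.

```python
# Hypothetical section names, chosen to mirror the kinds of documentation
# named in the article.
REQUIRED_SECTIONS = ("architecture", "design_system", "user_journeys")


def build_context(sections: dict[str, str]) -> str:
    """Join the documentation sections into one block of agent context.

    Raises ValueError if a required section is missing or blank, so the gap
    is noticed before the agent is asked to build anything.
    """
    missing = [name for name in REQUIRED_SECTIONS if not sections.get(name, "").strip()]
    if missing:
        raise ValueError(f"Missing context sections: {', '.join(missing)}")
    parts = []
    for name in REQUIRED_SECTIONS:
        title = name.replace("_", " ").title()
        parts.append(f"## {title}\n{sections[name].strip()}")
    return "\n\n".join(parts)


# Invented sample content; the ASCII line stands in for a data-model diagram.
context = build_context({
    "architecture": "User 1---* List 1---* Item",
    "design_system": "Primary button: 44pt min height, one per screen.",
    "user_journeys": "New user creates a list, adds an item, shares the list.",
})
print(context)
```

Failing loudly on a missing section is a deliberate choice in this sketch: a prompt sent without the design system or the user journeys is exactly the isolated fix that phase three moves away from.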

What AI Agent Orchestration Means for Design Teams

The team-level implications of AI agent orchestration run deeper than individual productivity. Traditionally, the “what” of design gets compromised by the “how” of engineering constraints. When building something requires an extensive refactor, scope narrows. AI-native teams can delay or avoid that negotiation entirely. When implementation speed increases, the quality of intent becomes the primary constraint — and that is exactly where design adds value.

Designers who produce working prototypes rather than static mockups change the handoff conversation. Engineering receives a functional proof of concept instead of a vision that may not survive contact with the codebase. The gap between design intent and shipped product shrinks. Design moves upstream. And teams that invest in design system documentation, annotation practices, and structured agent context will move faster than those that do not.

AI agent orchestration does not ask designers to become engineers. It asks them to be more precise about what they already do: define outcomes, think in systems, and communicate intent with enough clarity that the work can be executed without them in the room.