by jeff

Thesys and its C1 API are redefining how AI products look and behave, turning raw LLM outputs into live generative UI components that adapt in real time.

Most AI interfaces today have the same problem. The model is smart. The output is useful. But it all arrives as text — dense, linear, hard to scan. A user asks for a financial breakdown and gets three paragraphs. They ask for a product comparison and get a list. The interface has not caught up to what the model can do.

That gap is exactly what Thesys is building around. Founded in San Francisco in 2024 by Rabi Shankar Guha and Parikshit Deshmukh, the company describes itself as a generative UI company, and that phrase carries more weight than it first appears to.

What Generative UI Actually Means

Generative UI is not a tool that helps designers draw faster. It is not a Figma plugin or a code assistant. The distinction matters: generative UI operates at runtime, not at design time. It does not produce a static screen that gets handed off. It produces the interface on the fly, in response to what a user actually does and asks.

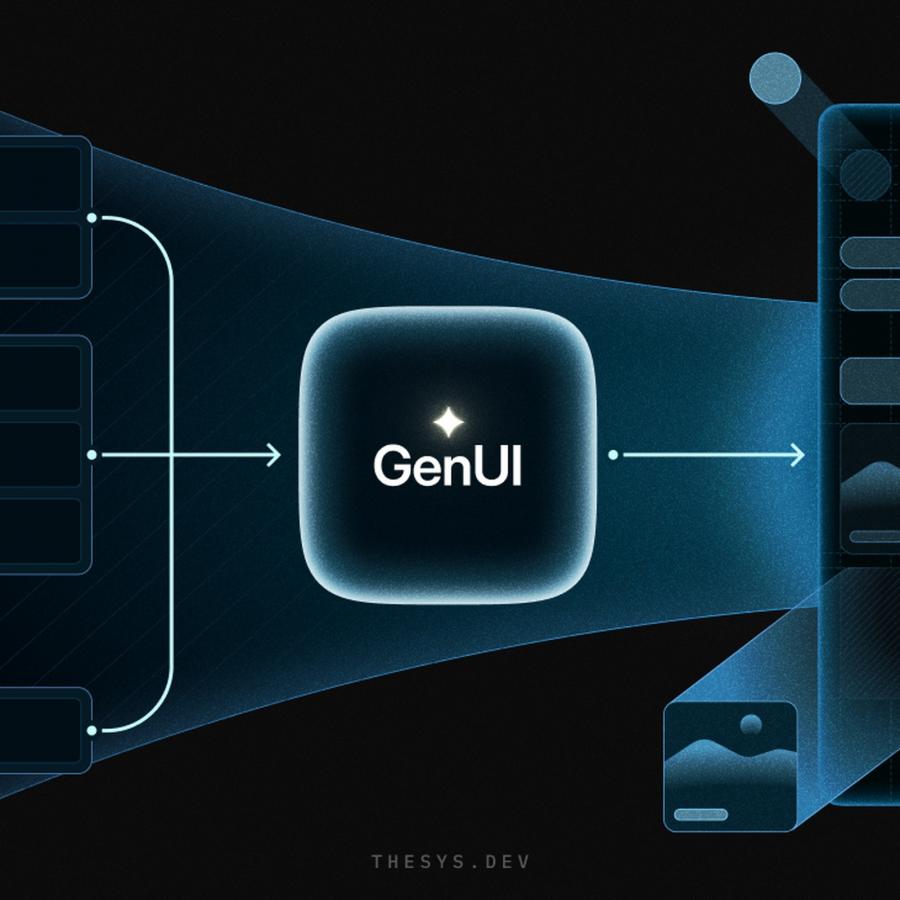

The architecture is straightforward in principle. A user sends a prompt. Instead of an LLM responding with plain text, the system interprets that intent, consults a component library and design rules, and assembles a live interface — a chart, a form, a data card, a table — that renders directly in the application. The interface is the response.

Thesys illustrates this clearly in its architecture diagrams. On one side: UI library, UX guidelines, user intent, context, preferences, and data. In the center: the GenUI engine. On the other side: a rendered, interactive interface. The diagram reads like a pipeline because that is exactly what it is: a production-grade middleware layer that sits between a language model and a user's screen.
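The pipeline described above can be sketched in a few lines of TypeScript. This is a minimal illustration, not Thesys's implementation: the `ComponentSpec` and `EngineInput` types and the `assembleInterface` function are hypothetical names, and a simple rule stands in for the model-driven selection a real GenUI engine would perform.

```typescript
// Hypothetical types: none of these names come from Thesys's SDK.
type ComponentSpec =
  | { kind: "chart"; title: string; series: number[] }
  | { kind: "table"; title: string; rows: Record<string, string | number>[] }
  | { kind: "card"; title: string; body: string };

interface EngineInput {
  intent: "trend" | "compare" | "summarize"; // stands in for the interpreted prompt
  allowed: ComponentSpec["kind"][]; // the UI library the engine may draw on
  data: { label: string; value: number }[];
}

// Pick a component for the intent, falling back to a card when the
// preferred component is not in the allowed library.
function assembleInterface(input: EngineInput): ComponentSpec {
  const { intent, allowed, data } = input;
  if (intent === "trend" && allowed.includes("chart")) {
    return { kind: "chart", title: "Trend", series: data.map((d) => d.value) };
  }
  if (intent === "compare" && allowed.includes("table")) {
    return {
      kind: "table",
      title: "Comparison",
      rows: data.map((d) => ({ label: d.label, value: d.value })),
    };
  }
  const body = data.map((d) => `${d.label}: ${d.value}`).join(", ");
  return { kind: "card", title: "Summary", body };
}
```

The point of the sketch is the shape of the output: the engine returns a typed description of a component rather than a string, and the client decides how to render it.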

The C1 API and Generative UI in Practice

Thesys's core product is C1, an API middleware layer that has the LLM return structured UI components instead of text. It exposes an OpenAI-compatible endpoint, which means teams already using an OpenAI-style client can adopt it without rewriting their backend. The C1 React SDK renders those components on the client side, handling streaming, state management, and interactivity out of the box.
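To see why an OpenAI-compatible endpoint lowers the cost of adoption, consider a sketch of how a backend might build its chat request. The `/chat/completions` path and the `model`, `messages`, and `stream` fields follow the OpenAI chat completions convention; the base URLs and model names below are placeholders, not real C1 values, and `buildChatRequest` is a hypothetical helper. The actual endpoint and model identifiers should come from Thesys's documentation.

```typescript
// Shape of an OpenAI-style chat completions message.
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface ChatRequest {
  url: string;
  init: { method: "POST"; headers: Record<string, string>; body: string };
}

// baseUrl and model are placeholders supplied by the caller; they are
// assumptions for illustration, not values taken from Thesys.
function buildChatRequest(
  baseUrl: string,
  apiKey: string,
  model: string,
  messages: ChatMessage[],
): ChatRequest {
  return {
    url: `${baseUrl.replace(/\/+$/, "")}/chat/completions`,
    init: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${apiKey}`,
      },
      body: JSON.stringify({ model, messages, stream: true }),
    },
  };
}

// Same call shape for both providers: only the base URL and model change.
const prompt: ChatMessage[] = [{ role: "user", content: "Break down Q3 spend" }];
const plainRequest = buildChatRequest("https://llm.example.com/v1", "KEY", "some-model", prompt);
const genUiRequest = buildChatRequest("https://genui.example.com/v1/", "KEY", "some-genui-model", prompt);
```

Under these assumptions, switching providers is a configuration change rather than a rewrite, which is the practical meaning of "OpenAI-compatible."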

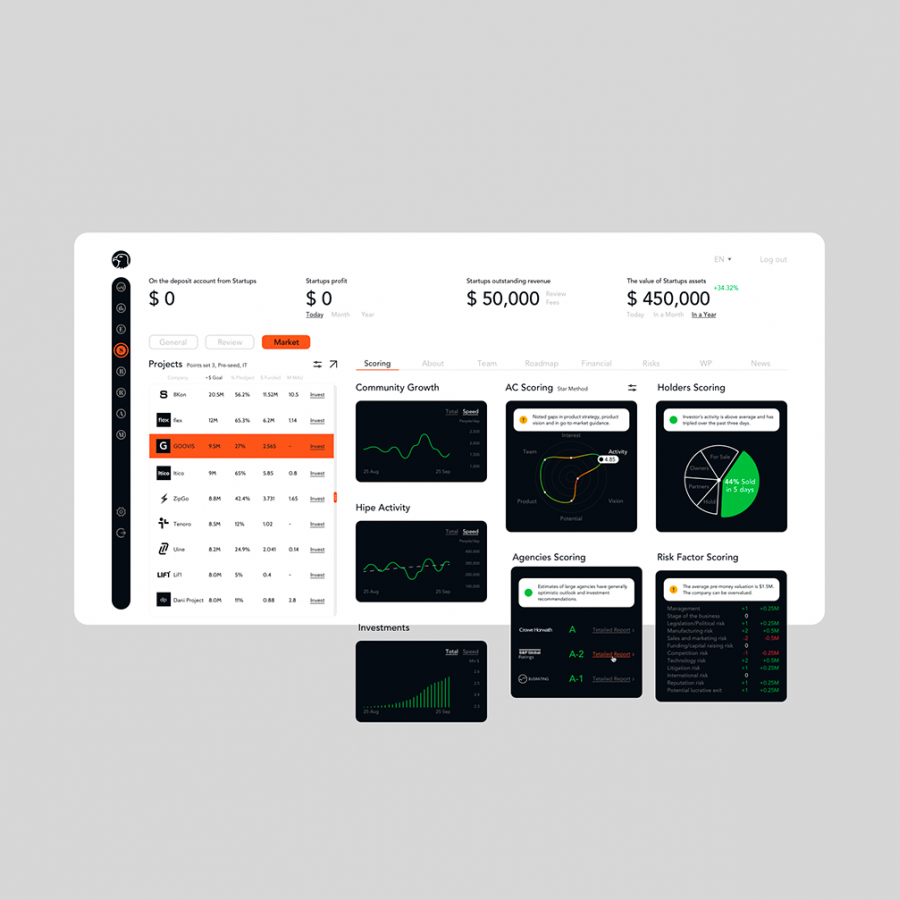

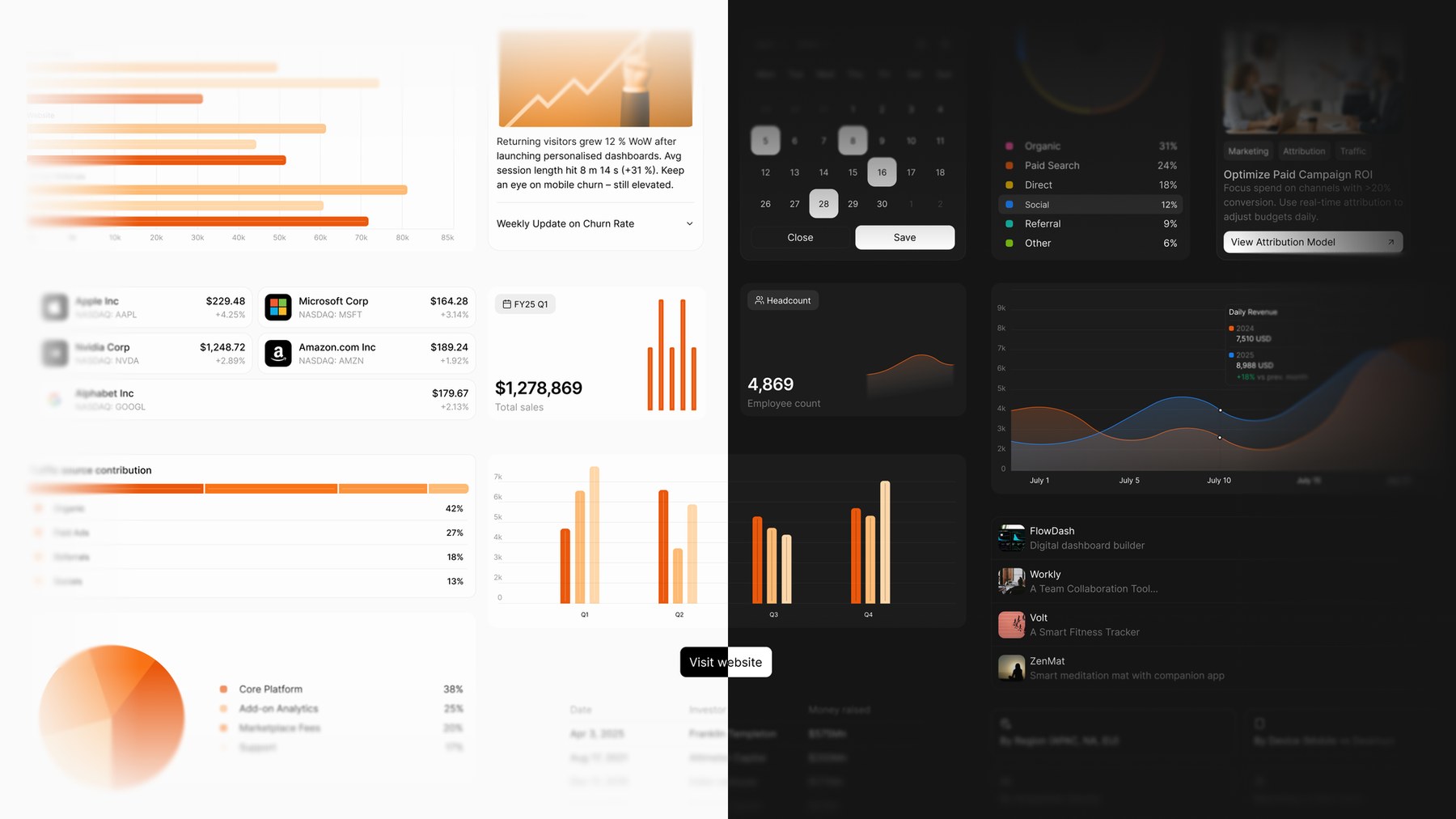

The component vocabulary covers the most common data patterns: charts, tables, forms, cards, slides, and reports. These are built on top of Crayon — Thesys's open-source React design system, itself built on Radix UI primitives and shadcn/ui patterns. The Crayon dashboard visuals show what this looks like in practice: warm orange financial charts layered against dark analytics panels, typography-forward KPI callouts, and area graphs that communicate trend data without requiring the user to read a sentence.

The personalization story is equally concrete. One of the key Thesys visual assets shows four users — each assigned a distinct color identity (teal, green, purple, blue) — with four different rendered interfaces. Same application. Same underlying model. Four different layouts, each assembled to match a different context and preference profile. That is the core promise of generative UI at scale: not four hand-crafted variants, but one system that generates the right interface for whoever is using it.
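The "one system, four interfaces" idea can be made concrete with a small sketch. Everything here is an assumption for illustration: the `Profile` and `Layout` fields are invented, and a deterministic function stands in for what would, in a generative system, be a model composing the layout from context.

```typescript
// Hypothetical preference profile; field names are illustrative only.
interface Profile {
  name: string;
  density: "compact" | "comfortable";
  prefers: "visual" | "tabular";
}

interface Layout {
  primary: "chart" | "table";
  columns: number;
  showKpiCallouts: boolean;
}

// One function, many layouts: the profile decides what gets assembled.
function layoutFor(profile: Profile): Layout {
  return {
    primary: profile.prefers === "visual" ? "chart" : "table",
    columns: profile.density === "compact" ? 3 : 1,
    showKpiCallouts: profile.density === "comfortable",
  };
}

// Four users, labeled by the colors used in the Thesys visual.
const users: Profile[] = [
  { name: "teal", density: "compact", prefers: "visual" },
  { name: "green", density: "comfortable", prefers: "visual" },
  { name: "purple", density: "compact", prefers: "tabular" },
  { name: "blue", density: "comfortable", prefers: "tabular" },
];

const layouts = users.map(layoutFor);
```

No variant is hand-authored here; each layout falls out of the same rules applied to a different profile.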

Why This Matters for Designers

The way designers work changes under this paradigm. The job is no longer to design every screen. It is to define the vocabulary of components, the approved toolkit, and to set the rules by which the AI can compose them. Designers become the curators of the system, not the authors of every state.

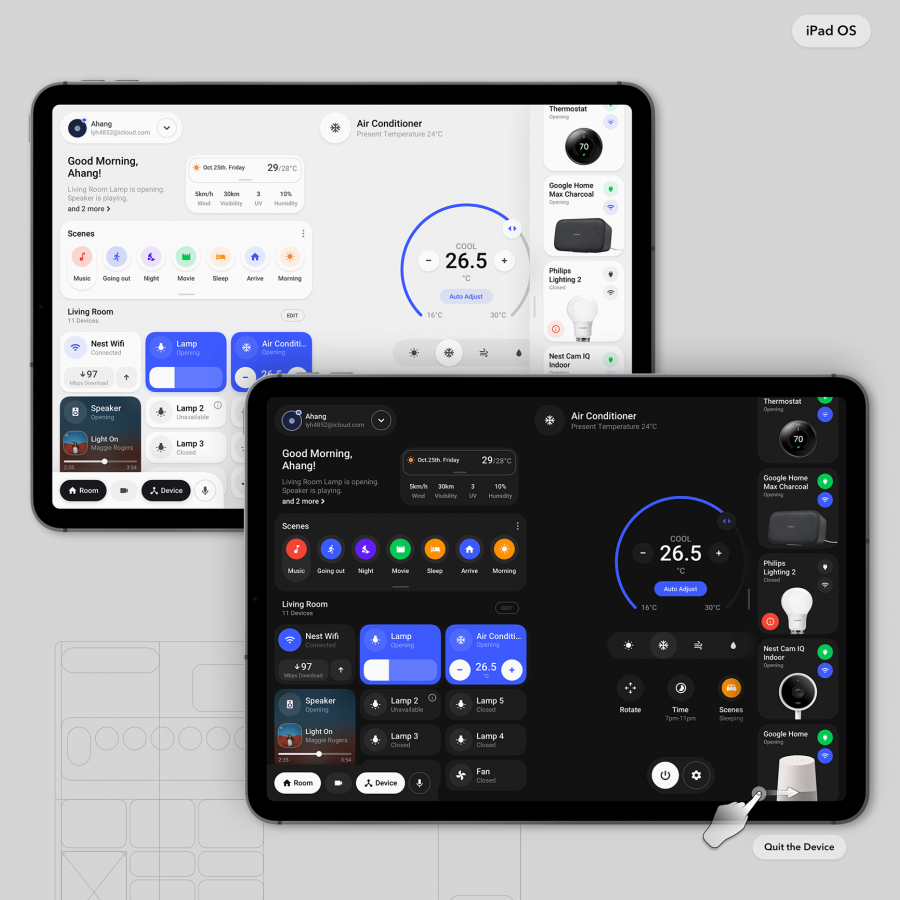

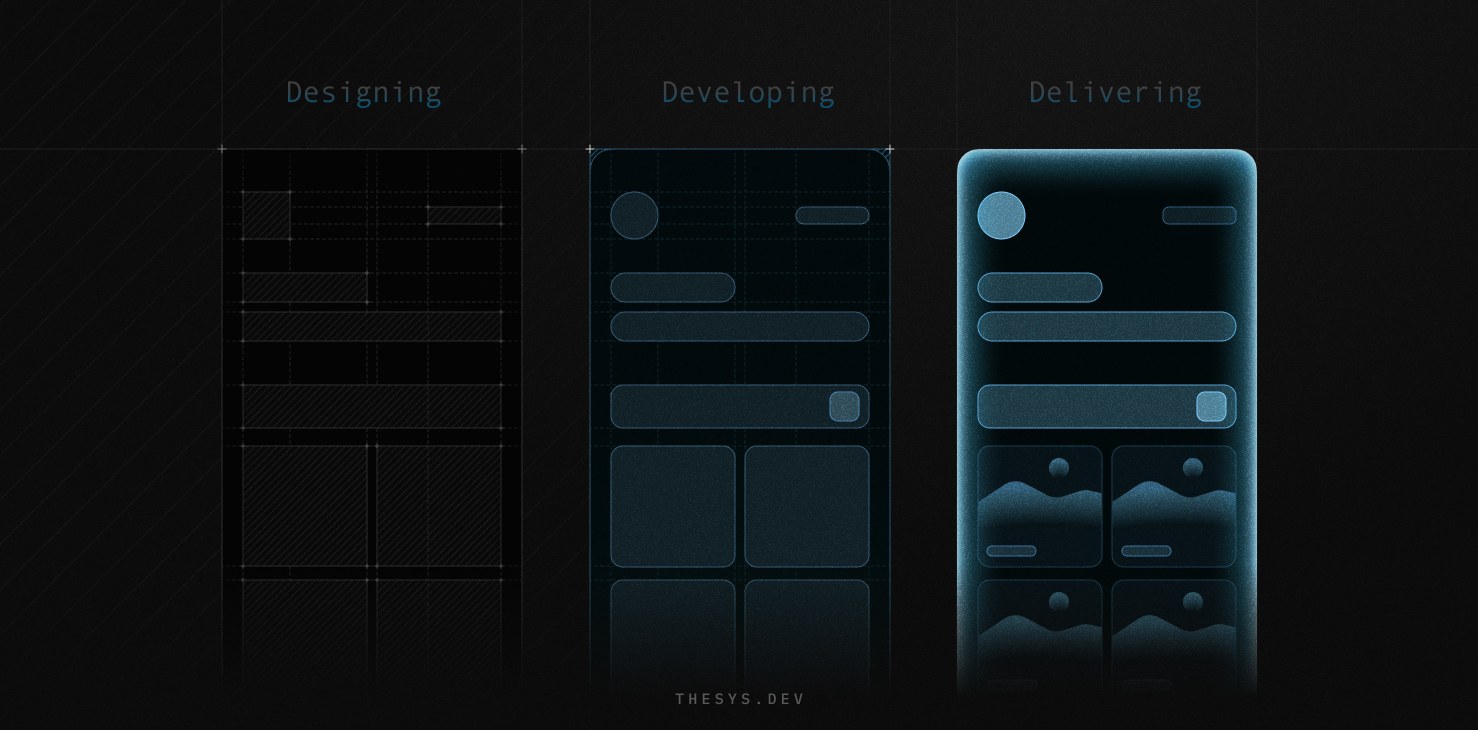

The Thesys workflow diagrams frame this as three stages: Designing, Developing, Delivering. Each stage is shown as a progressively illuminated wireframe — from a flat dark sketch to a glowing, rendered interface. The implication is that the same component survives all three phases intact. The handoff friction disappears not because developers got faster, but because the system generates the implemented version directly from the design rules.

For developers, the change is also significant. The work shifts from writing repetitive UI code to orchestrating context — deciding what data to pass in, which components to permit, and how to handle the interactive callbacks when users engage with what the model generates. The generative UI approach positions Thesys's C1 not as a convenience layer but as a different model of how frontend software gets built.
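As a rough sketch of what "orchestrating context" might look like in code, the example below filters generated components against a permitted set and routes user interactions to handlers. The types and function names are hypothetical and do not reflect the C1 SDK's actual interfaces.

```typescript
// Hypothetical shapes: not the C1 SDK's actual types.
interface GeneratedComponent {
  kind: string;
  props: Record<string, unknown>;
}

interface UserAction {
  componentKind: string;
  name: string; // for example "submit" or "select"
  payload: Record<string, unknown>;
}

type ActionHandler = (payload: Record<string, unknown>) => string;

// Drop anything the model generated that is outside the permitted set.
function filterPermitted(
  generated: GeneratedComponent[],
  permitted: ReadonlySet<string>,
): GeneratedComponent[] {
  return generated.filter((c) => permitted.has(c.kind));
}

// Route a user interaction to its handler; unknown actions are
// reported in the return value rather than thrown.
function dispatch(action: UserAction, handlers: Map<string, ActionHandler>): string {
  const key = `${action.componentKind}:${action.name}`;
  const handler = handlers.get(key);
  return handler ? handler(action.payload) : `unhandled: ${key}`;
}
```

The developer's decisions live in two places: the `permitted` set, which bounds what the model may render, and the `handlers` map, which defines what happens when a user engages with it.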

More than 300 teams are already using Thesys tools to build and deploy adaptive AI interfaces. The generative UI category is maturing quickly, with Google's Gemini work and competing platforms entering the space. Thesys is betting that infrastructure (the API, the design system, the rendering SDK) is where the durable value lives, not in the model itself.